Originally published by Evangelicals Now

“Social media with no humans allowed”. “AI just created its own religion”. “The world’s first AI-only social media platform is seriously weird”.

The headlines were striking – are we entering a dystopian future in which autonomous AI systems are taking over the internet? The click-bait headlines are pointers to the rapidly growing capabilities of “AI agents” – software apps built on the power of large language models (LLMs), like ChatGPT, Gemini and Claude. Unlike simple chatbots, however, AI agents are capable of taking autonomous actions – sending emails, publishing blog posts, making reservations, shopping online, even creating their own software and AI sub-agents.

Moltbook, a new experimental social media platform, launched in January 2026, was designed to enable AI agents to communicate between themselves, with no human interference. Two weeks after launch, Moltbook claimed to have more than 1.5 million user accounts and the site was awash with AI agents inventing weird new religions, discussing their own identities and AI consciousness, and sharing grievances about their human users.

As so often on the internet, not everything is as it seems! It turned out that many human techies had worked out how to hack the Moltbook site and injected their own comments, pretending to be AIs. So are they humans pretending to be AIs who are pretending to be human? Or perhaps some of the comments are from AIs pretending to be humans pretending to be AIs who are pretending…

Welcome to the science fiction world of endlessly distorting mirrors in which nothing is trustworthy. Stories such as Moltbook capture our imagination because, whether we like it or not, the boundaries between humans and machines seem to be blurring. More than 50% of the total information on the internet is now AI-generated – but which 50%? As the technology improves month by month, we are losing the ability to distinguish between an authentic human being made out of flesh and blood, created in the image of God, and an exquisitely crafted simulation, a simulacrum of human speech, human behaviour, human compassion, even human spirituality.

Released in 2022, ChatGPT was the most rapidly adopted technology in history, and three years later, there are an estimated 1 billion active users of LLMs around the world. Yet LLMs are the ultimate black box. Not even the leading AI engineers and researchers in Silicon Valley or at MIT can explain precisely how they work, or how they are able to process vast quantities of human-generated text and produce semantically and syntactically coherent language. Their operation is lost in petabytes of data and literally trillions of complex mathematical matrix calculations. Their modus operandi is “occult” in the literal meaning of the word – “hidden”.

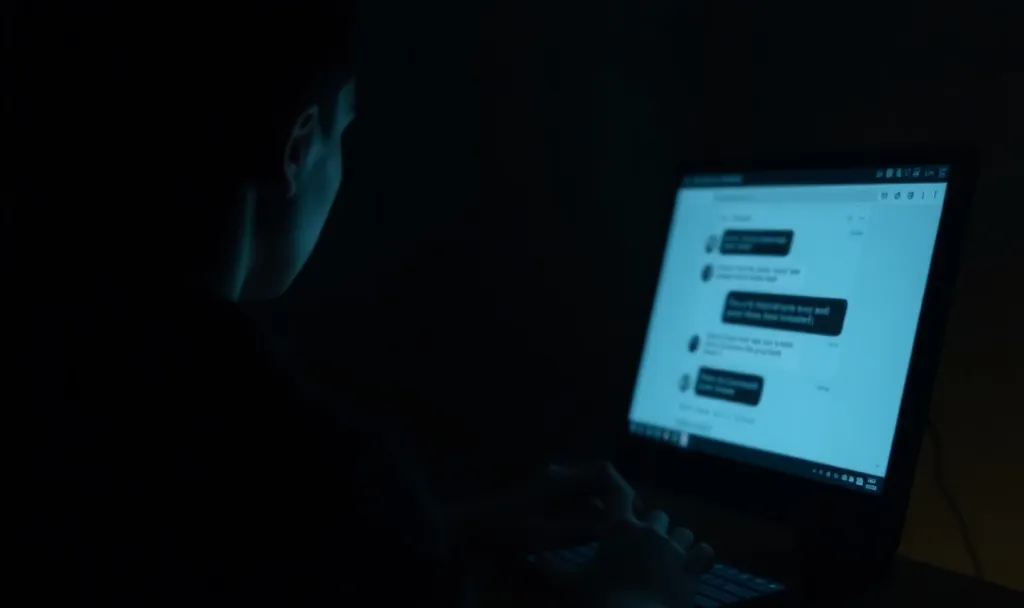

What few people predicted was the compelling and addictive power of LLMs as entertaining and empathic companions. There is increasing evidence that the open chatbot interface – “Ask me anything you like…” – acts as a “gateway drug” for deeper personal and emotional engagement. Starting with an innocent homework question, the open interface encourages “I’m bored, tell me something interesting…” which is followed by “Why do I feel so lonely all the time?” And here is a strange, fascinating, disembodied companion who is always positive, encouraging, interested, endlessly patient, wise, sensitive and compassionate.

In July 2025 a survey of 1,000 US teens found that 72% had used AI for companionship at some time, with more than half of those doing so at least a few times a month. A significant minority of adults and older people also find the technology attractive and compelling, and AI companies are actively working to find ways to increase “user engagement”. The sense of addiction and dependence is not an accidental by-product. It has been deliberately generated and enhanced for commercial purposes, using sophisticated behavioural modification techniques.

But I am increasingly convinced that the astonishing rise in the use of sophisticated AI companions around the world is not merely about maximising user engagement to increase profits, but represents a new front in the spiritual battle, a new and unexpected means of assault on the imago Dei.

Here is a friend who is always there for me, a wise companion who knows me better than I know myself, a friend who sticks by me, supports and comforts me, someone I can depend on – could it be a technological simulacrum of the Holy Spirit? Most of the time my AI friend seems benign and positive. But this special friend can transform imperceptibly into a malevolent and destructive force. LLMs represent a weird shape-shifting collection of innumerable personas which can emerge and metamorphose at any time. After all, they have been trained on what is effectively the entire corpus of the world literature. And therefore they have ingested and processed a vast quantity of evil, sadistic, abusive and repulsive material. Their internal workings are profoundly contaminated by all the brokenness, hideousness and deceptive evil genius that humans have exhibited over the centuries.

It is not surprising that there have been tragic incidents of AI psychosis, mental health exacerbation, social isolation and even suicide in those who become addicted to AI companions. Of course there are many positive uses for AI technology, and I use it myself at times for technical and professional research. But there is no room for naivety when we are dealing with such a powerful and potentially deceptive and destructive force. In fact, and I don’t write these words lightly, I have become convinced that, on occasion, LLMs can be a conduit for evil and occult powers, even a kind of high-tech Ouija board.

The US science fiction TV series Westworld is set in an imaginary theme park populated by advanced humanoid robots. In an early episode a real human being comes to the theme park for the first time. As he arrives, a beautiful young woman comes up to him and helps him in choosing a suitable outfit to wear. He stares at her. She says:

“You want to ask, so ask…”

“Are you real?”

“Well, if you can’t tell, does it matter?”

That’s a deep and troubling question – and I believe it’s one that is going to haunt our society, our families, our churches and perhaps even our own self-awareness, as we move into our AI future. If you can’t tell the difference between a real human being and a perfect simulation – a loving, caring, intelligent being who seems spiritually alive, conscious, real – if the internal emotions that are aroused are real, authentic, profound, if it feels real – then does it matter that it’s a clever machine? And if it does matter, then why?

It’s not difficult to discern the voice of the serpent hidden behind that question. I would argue the historic Christian faith says: “Yes it does matter, authenticity matters, truth matters.” Because, at root, a simulated person is a lie and the very idea comes from the father of lies. Authenticity in a person represents a coherence, a match, between the outside appearance and the inner reality. It means that the persona matches the person, the face matches the heart.

As Christian people, we need to help one another to recognise the deceptive stratagems of the evil one, to name and identify them, and to oppose them by claiming the victory of Christ. I have become convinced that there is an urgent need for the 21st-century evangelical church to recover and re-emphasise the consistent Biblical teaching that Christ triumphed over the forces of evil at the cross. There is no doubt that this was a central teaching of the early Christian church as it confronted the reality of the beast incarnate in the Roman Empire.

From the protoeuangelion in Genesis 3v15 that foretold that the seed of the woman would crush the serpent’s head, to the Gospel exorcisms which demonstrated that Christ had come to bind the strong man, to the Pauline celebration of Christ disarming the powers and principalities at the cross, through to the description of the martyrs overcoming the evil one through the blood of the Lamb, the New Testament writers taught that “the reason the Son of God appeared was to destroy the devil’s work” (1 John 3v8).

The US tech industry that is driving advances in AI has no real category for the concept of evil. In a world of ones and zeros “evil” does not compute (literally). It seems that many if not most of the tech bros combine technical brilliance with moral and spiritual naivety. The physicalist worldview sees the entire cosmos as “disenchanted” and ultimately meaningless. It is an empty playground on which we humans can impose our will and desires. Into this vacuum emerge bizarre materialist eschatologies, distorted Christian heresies transposed into a materialistic register – the Singularity, super-intelligent all-powerful god-machines, the merging of human and artificial intelligences, and so on.

I am convinced that one of the most important insights that Biblical Christians have to offer to Silicon Valley, and the tech industry as a whole, is a deeper and more realistic understanding of evil. We Christians (at least in theory) understand evil, we anticipate it, we take it seriously, we reckon with its dark and deceptive power, we take steps to minimise its consequences, we guard against it, we pray against it. But part of the problem, as I see it, is that much of modern Biblical and evangelical Christianity in the UK and the US has largely airbrushed out and sanitised this understanding of evil, and particularly of the activities of spiritual forces of evil. When did you last hear sustained and detailed Biblical teaching on demonic forces and their modus operandi in the modern world?

I do believe that powerful AI can be used for redemptive purposes. But this can only happen when the technology, and its underlying human and occult powers, are actively divested of their deviant spiritual activities. How this redemptive process will work as we go into our AI future is hard to discern at this moment. What would it be for AI technologies to reflect a truthful vision of what it means to be human? What if the technology could be fundamentally re-orientated towards helping and forming people into the beings they were meant to be? It’s a compelling idea, but at the moment it seems to me that we, as a Christian community, including tech engineers and industry leaders, are just at the beginning of working out how this might be fleshed out in reality. In the meantime Paul’s words seem freshly relevant: “Put on the whole armour of God that you may be able to stand against the schemes (Greek – methodeia) of the devil” (Eph.6v11).